How IBM Is Building A Business Around Watson

- by 7wData

In 2004, Charles Lickel was eating dinner with some colleagues when he noticed that all of the patrons were rushing to the bar. Curious, he followed them to see what the commotion was about. As it turned out, they were going to watch Ken Jennings’ historic six-month run on the game show Jeopardy!

He was transfixed. Paul Horn, then director of IBM Research, had been pressing Lickel to come up with an idea for the company’s next “grand challenge,” Big Blue’s tradition of tackling extraordinarily hard problems just to see whether they can be solved. The previous one drew wide attention when the firm’s Deep Blue computer defeated Garry Kasparov in a chess match in 1997.

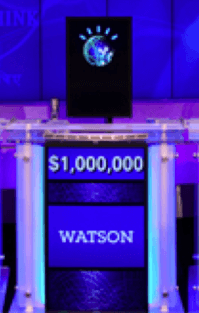

The rest, as they say, is history. Seven years later, in 2011, IBM’s Watson beat Jennings and another Jeopardy! champion, Brad Rutter. Today, Watson is much more than a clever parlor trick; it is a potentially huge line of business for IBM. CEO Ginni Rometty expects it to become the heart of Big Blue’s future plans. Yet there are still challenges ahead.

The field of artificial intelligence got its start at a conference at Dartmouth in 1956. Optimism ran high, and it was believed that machines would be able to do the work of humans within 20 years. Alas, it was not to be. By the 1970s, funding had dried up and the field entered the period now known as the AI winter.

Slowly, however, progress was made, and by 1992 interest in artificial intelligence had revived somewhat. The US government began hosting a series of conferences that posed challenges for question answering, or “QA,” systems. IBM took part in these conferences and began making advances in a range of techniques.

In the beginning, the researchers experimented with rule-based systems, similar to Doug Lenat’s Cyc project, that would answer questions based on information provided by human experts, much the way an encyclopedia works. However, they soon found that such systems don’t scale beyond a certain point.

So they began exploring techniques that more closely resembled how the human brain takes in information, processes it and makes decisions. For example, deep parsing techniques break sentences down into their grammatical components and the relationships between them, while support vector machines can learn from large amounts of labeled data and then draw conclusions about new examples.
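To make the support vector machine idea a little more concrete, here is a minimal sketch of a linear SVM trained by stochastic sub-gradient descent on the regularized hinge loss (a Pegasos-style update). It is a generic textbook illustration, not anything taken from IBM’s Watson pipeline; the function names, the toy data and the parameters `lam`, `epochs` and `seed` are all hypothetical choices for the example.

```python
import random


def train_linear_svm(points, labels, lam=0.01, epochs=50, seed=0):
    """Hypothetical minimal linear SVM, trained with Pegasos-style
    stochastic sub-gradient descent on the regularized hinge loss.

    points: list of feature tuples; labels: list of +1 / -1.
    A constant 1.0 feature is appended so the last weight acts as a bias
    (it is regularized too, a common simplification).
    """
    rng = random.Random(seed)
    data = [(tuple(x) + (1.0,), y) for x, y in zip(points, labels)]
    w = [0.0] * len(data[0][0])
    t = 0
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            t += 1
            eta = 1.0 / (lam * t)  # step size shrinks over time
            margin = y * sum(wj * xj for wj, xj in zip(w, x))
            # Gradient step for the L2 penalty (lam / 2) * ||w||^2
            w = [(1.0 - eta * lam) * wj for wj in w]
            if margin < 1.0:
                # Hinge loss is active: nudge w toward classifying x correctly
                w = [wj + eta * y * xj for wj, xj in zip(w, x)]
    return w


def predict(w, point):
    """Return +1 or -1 depending on which side of the hyperplane the point lies."""
    x = tuple(point) + (1.0,)
    score = sum(wj * xj for wj, xj in zip(w, x))
    return 1 if score >= 0 else -1


if __name__ == "__main__":
    # Hypothetical toy data: two well-separated clusters.
    pos = [(2, 2), (3, 3), (2, 3), (3, 2)]
    neg = [(-2, -2), (-3, -3), (-2, -3), (-3, -2)]
    w = train_linear_svm(pos + neg, [1] * 4 + [-1] * 4)
    print(predict(w, (4, 4)), predict(w, (-4, -4)))  # prints: 1 -1
```

The point of the sketch is the “learn, then conclude” pattern the paragraph describes: the weights are fitted once from labeled examples, and new, unseen points are then classified by the sign of a single dot product.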

Up to that point, though, these were isolated projects worked on by separate teams. What Lickel saw that night in the bar was an opportunity to weave it all into a coherent system. “The Jeopardy Grand Challenge problem allowed us to pool all of this disparate work we were doing and focus our energies to see if we could solve a really big problem,” says Eric Brown, who worked on the project and is currently a Director within the Watson division.

Jeopardy! presents a unique challenge for an artificial intelligence system. First, it covers an impossibly wide variety of topics, so you can’t just train the system to operate within a single domain. The clues are also written in complex language and contain puns and cultural references, which often obscure what is actually being asked.

To answer the first clue correctly (“Who is Charles Dickens?”), you would have to realize that “struck England” refers to a birth date and that “Hard Times” refers to one of Dickens’ books. To answer the second (“What is Narnia?”), you would have to realize that the clue refers to a fictional geography, not an actual one.

Other aspects of the game increase the difficulty even further. For example, you are penalized for wrong answers, so you have to not only come up with a viable response but also gauge how confident you are that it is right. There are also time constraints, so you need to be able to respond within a few seconds at most.
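The trade-off between answering and staying silent can be sketched as a simple expected-value rule. This is a hypothetical model, not Watson’s actual strategy: it assumes a correct response adds the clue’s dollar value v, a wrong one subtracts the same amount, and it ignores game-level factors such as the score gap or Daily Doubles. Under those assumptions the expected gain is p·v - (1 - p)·v = (2p - 1)·v, so buzzing in pays off only when the top candidate’s confidence p exceeds 0.5. The function name `decide` and the confidence numbers in the usage line are illustrative only.

```python
def decide(candidates, clue_value):
    """Hypothetical buzz-in rule.

    candidates: dict mapping a candidate response to its estimated
    probability of being correct. Returns the best response if buzzing
    in has positive expected value, otherwise None (stay silent).
    """
    best_answer, confidence = max(candidates.items(), key=lambda kv: kv[1])
    # Right: +clue_value with probability p; wrong: -clue_value with probability 1 - p.
    expected_gain = confidence * clue_value - (1.0 - confidence) * clue_value
    return best_answer if expected_gain > 0 else None


if __name__ == "__main__":
    # Made-up confidence scores for illustration.
    print(decide({"Who is Charles Dickens?": 0.8, "Who is Thomas Hardy?": 0.15}, 800))
    print(decide({"What is Narnia?": 0.4, "What is Oz?": 0.35}, 400))
```

With the made-up scores above, the first call returns “Who is Charles Dickens?” and the second returns None, because even the best guess is less likely to be right than wrong.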
