How to Become a Whistleblower: from Panama Papers to Open Data

- by 7wData

We are at a point in the digital age where corruption is increasingly difficult to hide. Information leaks are abundant and shocking.

We rely on whistleblowers for many of these leaks. They have access to confidential information that’s impossible to obtain elsewhere. However, we also live in a time where data is more open and accessible than at any other point in history. With the rise of Open Data, people can no longer shred away their misdeeds. Nothing is ever truly deleted from the internet.

It might surprise you how many insights into corruption and graft are hiding in plain sight through openly available information. The only barriers are clunky websites, inexperience in data extraction, and unfamiliarity with data analysis tools.

We now collectively have the resources to produce our own Panama Papers, not just as one-offs but as regular accountability checks on those in positions of power. This is especially true if we combine our information to uncover further links.

One example of this democratization of information is a recent project in Peru called Manolo and its intersection with the Panama Papers. Manolo uses web scraping of open data to collect information on Peruvian government officials and lobbyists.

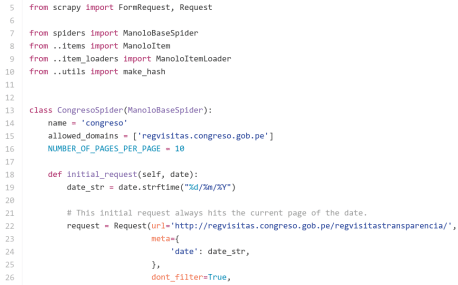

Manolo is a web application that uses Scrapy to extract records (2.2 million so far) of the visitors frequenting several Peruvian state institutions. It then repackages the data into an easily searchable interface, unlike the government websites.
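To make the extraction step concrete, here is a minimal sketch of turning a visitor-log table into structured records. It is not Manolo's code and it does not use Scrapy: it relies only on Python's standard-library `html.parser` so that it runs without extra dependencies. The table layout, the sample rows, and the names `VisitorTableParser` and `FIELDS` are all assumptions made up for illustration.

```python
from html.parser import HTMLParser

# Hypothetical column names; a real visitor log would define its own.
FIELDS = ("visitor", "official", "date")

# Invented sample page, standing in for a government visitor-log table.
SAMPLE_HTML = """
<table>
  <tr><th>Visitor</th><th>Official visited</th><th>Date</th></tr>
  <tr><td>Ana Torres</td><td>Official X</td><td>2015-04-10</td></tr>
  <tr><td> Juan Quispe </td><td>Official Y</td><td>2015-06-21</td></tr>
</table>
"""


class VisitorTableParser(HTMLParser):
    """Collect the text of every <td> cell, grouped by table row."""

    def __init__(self):
        super().__init__()
        self.rows = []     # completed rows, each a list of cell strings
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag == "td" and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            # Header rows contain only <th> cells, so they stay empty and are skipped.
            if self._row:
                self.rows.append(self._row)
            self._row = None


parser = VisitorTableParser()
parser.feed(SAMPLE_HTML)
records = [dict(zip(FIELDS, row)) for row in parser.rows]

for record in records:
    print(record)
```

Once the rows are plain dictionaries, they can be stored in a database and searched, which is the part that makes the data more usable than the original websites.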

Peruvian journalists frequently use Manolo. It has even helped them uncover illegal lobbying by tracking visits to specific government officials by construction company representatives who are currently under investigation.

Developed by Carlos Peña, a Scrapinghub engineer, Manolo is a prime example of what a private citizen can accomplish. By giving the Peruvian media access to this data, the project has opened up a much-needed conversation about transparency and accountability in Peru.

With leaks like the Panama Papers as a starting point, web scraping can be used to build datasets to discover wrongdoing and to call out corrupt officials.

For example, you could cross-reference names and facts from the Panama Papers with the data that you retrieve via web scraping. This would give you more context and could lead to further findings.
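A cross-reference like this can be sketched in a few lines of Python. The example below is an illustration under stated assumptions, not the method used with Manolo: the sample names and records are invented, and the helper names `normalize_name` and `cross_reference` are hypothetical. It normalizes names (case, accents, spacing) before comparing them, since the same person is often spelled differently across sources.

```python
import unicodedata


def normalize_name(name: str) -> str:
    """Lowercase, strip accents, and collapse whitespace so spelling variants compare equal."""
    decomposed = unicodedata.normalize("NFKD", name)
    without_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return " ".join(without_accents.lower().split())


def cross_reference(leaked_names, visitor_records):
    """Return the visitor records whose visitor name also appears in the leaked names."""
    leaked = {normalize_name(n) for n in leaked_names}
    return [r for r in visitor_records if normalize_name(r["visitor"]) in leaked]


# Hypothetical sample data, invented for illustration only.
leaked_names = ["María Pérez", "JUAN  QUISPE"]
visitor_records = [
    {"visitor": "Maria Perez", "institution": "Ministry A", "date": "2015-03-02"},
    {"visitor": "Ana Torres", "institution": "Ministry B", "date": "2015-04-10"},
    {"visitor": "Juan Quispe", "institution": "Ministry A", "date": "2015-06-21"},
]

matches = cross_reference(leaked_names, visitor_records)
for record in matches:
    print(record["visitor"], record["institution"], record["date"])
```

An exact match on normalized names is only a starting point. Different people can share a name, so any hit should be treated as a lead to verify against other sources rather than as proof.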

We actually tested this out ourselves with Manolo. One of the names found in the Panama Papers is Virgilio Acuña Peralta, currently a Peruvian congressman. We found his name in Manolo’s database since he visited the Ministry of Mining last year.

According to the Peruvian news publication Ojo Público, Acuña wanted to use Mossack Fonseca to reactivate an offshore company that he could use to secure construction contracts with the Peruvian state. For a congressman, this is illegal.
