HPE and BlueData – A Game-Changing Combination for Big Data

- by 7wData

Last week, at the Strata + Hadoop World conference, an estimated 7,000 people descended upon New York City to hear about the latest and greatest in Big Data, Artificial Intelligence, and the Internet of Things. I enjoy this conference for two main reasons: it’s a great opportunity to meet with dozens (if not hundreds) of enterprise customers and partners all in one place; and it’s an opportunity to learn about new innovations and lessons learned from experts in the field.

Hands down, the highlight of my week was a roundtable and dinner (sponsored by BlueData and Intel) attended by Big Data executives from organizations including ADP, Bank of America, Cornell University, Fidelity, GlaxoSmithKline, JPMorgan Chase, Nasdaq, USAA, and other Fortune 500 enterprises. Although my sample was completely unscientific and statistically insignificant, I heard three common themes at the beginning of our roundtable discussion:

This really wasn’t a surprise. I’ve been reading the prognostications from Gartner’s Merv Adrian, Forrester’s Mike Gualtieri, and ESG’s Nik Rouda echoing similar points. And here at BlueData, I’ve written in the past about the complexity of Big Data deployments.

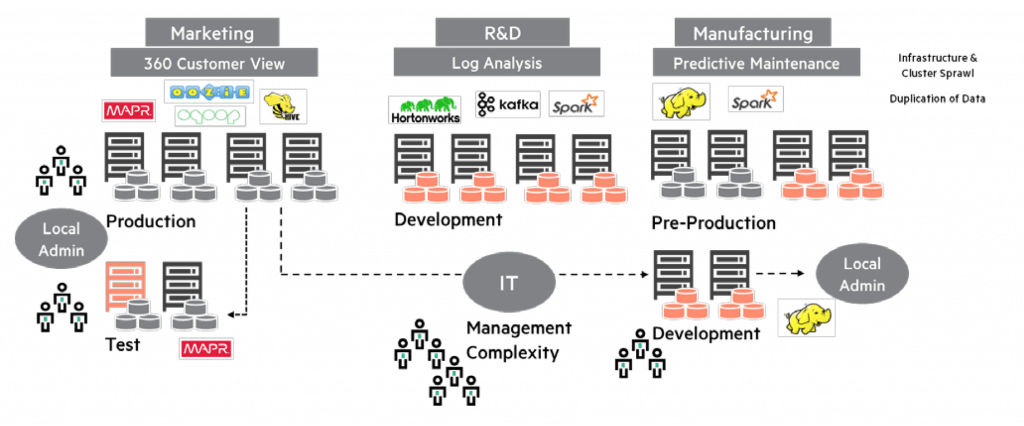

The graphic below illustrates the traditional bare-metal approach for on-premises Big Data deployments. It often takes several weeks to provision the systems and infrastructure required for each new cluster. There may be multiple clusters containing mostly the same data, leading to cluster sprawl and massive data duplication. And all this leads to management challenges on multiple fronts – including data management and governance, as well as the lack of skilled resources to manage each cluster.

However, the really good stuff started to come a little later in the roundtable discussion that evening in New York. Three related themes became even louder and clearer:

These themes are provocative, but they do indicate the sentiment within enterprises today. At the end of the day, the Big Data experts and executives at these enterprises don’t want to see either on-premises or public cloud “win”. What they want is faster time-to-value for their Big Data initiatives (and lower TCO in the process) – while ensuring enterprise-class security, data governance, performance, and scalability.

What do you get when you cross a VMware with an Amazon EMR?

So what does all of this have to do with HPE? Well, about five months ago, we first started working with our new friends at HPE. The conversations started in the field, as most good partnerships do: with a goal of providing a compelling joint solution for our enterprise customers.
