Big Data No Longer a Big Problem

- by 7wData

Today’s enterprise-level organizations produce ever-increasing volumes of data each day. As employees create new documents, presentations, spreadsheets and more, the amount of stored content grows dramatically over time. This rapid data growth creates a challenging new problem for IT professionals: how to store all the data in the most cost-effective way, while ensuring end-users have immediate access to the information they need.

Many organizations depend on expensive, onsite Network Attached Storage (NAS) systems to house their data. While legacy NAS systems generally meet end-user demand for fast and easy access to regularly used “active” data, approximately 80% of the data stored in these systems is inactive and rarely, if ever, accessed by end users. Storing all that “cold” data on “hot” storage media is both inefficient and extremely expensive. Adding up the cost of necessary data protection, hardware, software, floor space, electrical power, and staff to manage the storage process, each gigabyte of NAS storage costs an organization an estimated $0.75 per month. Over the course of a year, that adds up to $9,000 per terabyte ($0.75 × 1,000 GB × 12 months).
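The annual figure follows from simple arithmetic. The short sketch below reproduces it, assuming a decimal terabyte (1,000 GB); the function and constant names are illustrative and not taken from any vendor tool.

```python
# Assumption: 1 TB = 1,000 GB (decimal), which matches the article's $9,000 figure.
GB_PER_TB = 1000
MONTHS_PER_YEAR = 12


def annual_cost_per_tb(cost_per_gb_month: float) -> float:
    """Convert a monthly per-gigabyte cost into an annual per-terabyte cost."""
    return cost_per_gb_month * GB_PER_TB * MONTHS_PER_YEAR


nas_annual = annual_cost_per_tb(0.75)  # $0.75 per GB per month, from the article
print(f"NAS: ${nas_annual:,.0f} per TB per year")  # prints "NAS: $9,000 per TB per year"
```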

Ideally, an enterprise IT professional could identify and move those cold files easily, placing them in much cheaper internal or external cloud storage costing as little as $0.01 per gigabyte. However, manually moving older files from one storage location to another is a daunting task. An IT professional faces a lot of questions: What is the best way to identify the files to be moved? How often are the files used? What if older files moved out of primary storage are needed? How can all that data be maintained securely regardless of its storage location? How can the process be automated? The Big Data challenge is a big one indeed.
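To get a feel for what is at stake, here is a rough estimate of the annual bill for one terabyte when the cold portion is moved to cheaper storage. It is only a sketch under stated assumptions: the 80% cold share and both prices come from the figures quoted above, the $0.01 price is assumed to be per gigabyte per month (the article does not give the billing period), and retrieval or migration fees are ignored.

```python
MONTHS_PER_YEAR = 12


def tiered_annual_cost(total_gb: float,
                       cold_fraction: float,
                       hot_cost_gb_month: float = 0.75,
                       cold_cost_gb_month: float = 0.01) -> float:
    """Annual cost when cold data sits on cheap storage and the rest stays on NAS.

    Assumption: both prices are per gigabyte per month.
    """
    if not 0.0 <= cold_fraction <= 1.0:
        raise ValueError("cold_fraction must be between 0 and 1")
    cold_gb = total_gb * cold_fraction
    hot_gb = total_gb - cold_gb
    monthly = hot_gb * hot_cost_gb_month + cold_gb * cold_cost_gb_month
    return monthly * MONTHS_PER_YEAR


all_nas = tiered_annual_cost(1000, cold_fraction=0.0)  # everything stays on NAS
tiered = tiered_annual_cost(1000, cold_fraction=0.8)   # 80% moved to cheap storage
print(f"All on NAS: ${all_nas:,.0f} per year")
print(f"Tiered:     ${tiered:,.0f} per year")
print(f"Savings:    ${all_nas - tiered:,.0f} per year")
```

Under these assumptions the tiered setup costs roughly $1,896 per terabyte per year instead of $9,000. Real savings will depend on the actual cold share and on the provider's pricing model.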

As a solution provider, Equus regularly encounters enterprise customers facing this type of challenge. To deliver a proof-of-concept solution, infinite io turned to Equus for access to cutting-edge Intel technology, which aided its new product development. In turn, infinite io was able to deliver advanced solutions with a lengthy five-year product life cycle.

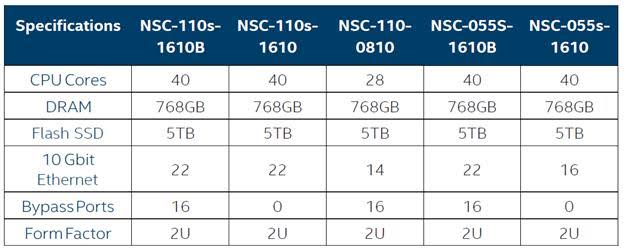

The infinite io team, based in Austin, Texas, has solved these problems by tapping the latest Intel technologies incorporated in the Intel® Server System S2600WT for its new Network-Based Storage Controllers (NSCs). infinite io’s controller scans metadata to identify the most-used data and documents. Active data is flagged for “hot” onsite storage, providing the fastest access for end users. The controllers also ease the process of identifying cold data, which can be moved to much more cost-effective storage while remaining readily accessible. The NSCs maintain added data security through deep packet inspection, bump-in-the-wire capability, advanced encryption, and access control list (ACL) support.
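The general idea of sorting files into hot and cold by their metadata can be illustrated with a small script. This is not infinite io's implementation, which works at the network level; it is a minimal sketch that walks a local directory and uses each file's last-access timestamp. The `classify_files` helper and the 180-day threshold are hypothetical, and on file systems mounted with access-time updates disabled the timestamps may be unreliable.

```python
import os
import time
from pathlib import Path

SECONDS_PER_DAY = 86400
COLD_AFTER_DAYS = 180  # hypothetical threshold; tune to the organization's policy


def classify_files(root, cold_after_days=COLD_AFTER_DAYS, now=None):
    """Split the files under `root` into (hot, cold) lists by last-access time."""
    now = time.time() if now is None else now
    cutoff = now - cold_after_days * SECONDS_PER_DAY
    hot, cold = [], []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                last_access = path.stat().st_atime
            except OSError:
                continue  # file vanished or is unreadable; skip it
            # Files not accessed since the cutoff are candidates for cheap storage.
            (cold if last_access < cutoff else hot).append(path)
    return hot, cold
```

Calling `hot, cold = classify_files("/path/to/share")` returns two lists of paths. A real tiering tool would also need to handle moving the cold files and keeping them reachable, which this sketch leaves out.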

infinite io chose the Intel® Server System S2600WT after an extensive search for a server product that meets the extreme performance and reliability specifications of its controllers. Each Intel product undergoes a rigorous design and testing process to ensure each element is capable of meeting the high demands of enterprise organizations. On top of this, Intel backs its products with a three-year warranty that includes an option to extend coverage to five years. Customers can also count on Intel’s 24/7 support and have confidence in their purchases knowing Intel will replace or refund any product that fails. Similarly, infinite io relies on Equus Onsite Warranty and Support programs to tap the benefits of the technical service provider network Equus has established and to expedite parts replacement if necessary.

